Recently our TFS Database size has peaked at over 570GB. Granted we do have a lot of people working against it and use it fully for Source Control, Work Items and Builds. We used to have this problem with 10s of GB being added each week. The cause then on TFS 2010 was the Test Attachments and a run of the Test Attachment Cleaner would clean it up. Kinda. We found after a while although the tables were reporting smaller, we needed two SQL Server hotfixes to allow the space to actually be freed. After that though 100s of GB flowed free and things were good. These details are covered well in a post by Anutthara Bharadwaj.

We continued running the tool and then upgraded to TFS 2012 and were told (TFS 2010 SP1 Hotfix) the problem had now gone away. We stopped running the test attachment cleaner and later upgraded to where we are now on TFS 2013.

This year however our system administrator noticed we were running out of space again. However, looking at the Disk Usage By Top Tables report the tbl_Attachment table was not the problem. It was the tbl_Content table.

From Grant Holliday’s post he tells us that the Content table is the versioned files. In the forums there is this,

“If you have binary files, the deltafication of the files will add size to the table. For example, you might have 15 binary files and 1000 changes to the files – all that data needs to be stored somewhere.”

This got me to check out my source into a clean workspace and running Space Sniffer against it to spot if anything big had been added. Our entire source is about 50 GB. Which sounds like the total size isn’t too far off. But Main is only 820 MB and the whole team is working on there. We have been doing lots and compared to a 4 months ago it was 730 MB. We have many branches but that should only be less than a GB per branch and being a delta it should be next to nothing. Checking the tbl_Content table itself showed that the biggest rows were years old and no new large binaries have been added.

I then came across the comprehensive post by Terje Sandstrom. The also contained some queries for TFS 2012 to determine the attachment content size. Here’s where it doesn’t make sense. The report table size from the disk usage report, does not match up, whatsoever, against what these queries returned. And the query from Grant Holliday showing monthly usage again show huge amounts of data (60GB per month) in a table that is 680MB. Who is correct, SQL Server reports or table queries?

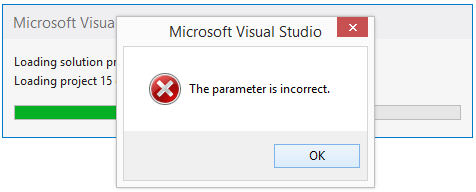

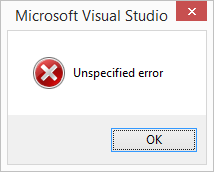

I then ran the Test Attachment Cleaner in preview mode, and sure enough it said it was cleaning up GBs of files. So I ran a Delete against the TFS database. While the cleaner was running the queries were showing the size in those tables dropping, and the Disk Usage report was showing drops in the tbl_Attachment table, albeit at a much much smaller scale. The total database size however was unchanged and the available space was getting less! On completion it said: Total size of attachments: 206030.5 MB!

The size reported by the database properties when I began was:

After cleaning apparently 200GB it is now:

Another suggestion was to delete old workspaces, which we have done. To my surprise this released about 5 GB from the content table. Git TFS can create a lot of workspaces.

Hence the problem. We are growing at up to about 5 GB per day. Our System Administrator is doing an awesome job keeping up. But we need to know if this is to be expected to continue or if there is something to tell us how we can use less space.

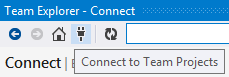

Update 3 April 2014: I have put the question on the forums.

Update 4 April 2014: Something has happened overnight. I’m assuming the workspace clean has caused it. We now have 115 GB space available! What’s odd though is that the tbl_Content size has dropped that space. What does that table have to do with workspaces? Some insights into how this is working so we can manage our systems would be appreciated.

Update 7 April 2014: More space has flowed free over the weekend without doing anything else. A shrink is in progress. Pretty crazy that 34% of the content size was local workspaces?

Update 8 April 2014: Shrink complete. Looking much better now. Notice however that the tbl_Content table in a day has gone up a few gigabytes, which is quite concerning.

Update 11 Apr 2014: By the end of the week, we have consumed around 5 GB in 3 days. I don’t see any significant amount of new workspaces created either. Unless the build server with TFS Service is creating them. I’m going to clean up TFS Service’s workspaces at the risk of breaking some builds so that I can monitor them carefully. I now have 51 server workspaces for TFS Service. Which does seem like a lot but we do have 18 Build Agents and many build definitions.

Update 14 Apr 2014: I don’t know what has happened Friday and over the weekend. The content has grown 2 GB but the database expanded a massive 60 GB! Event though the autogrowth is set to 1%. So a shrink is in progress.

Update 14 Apr 2014 #2: After the shrink it is back to the 2 GB growth for Friday and the weekend.

Update 16 Apr 2014: A couple of days growth.

Update 22 Apr 2014: Over the long weekend, it has done exactly the same thing. Another 60 GB expanded to. I’m not going to shrink this time. There is now 54 server workspaces for TFS Service, only 3 more than 11 days ago, so nothing extreme there. The growth of the Data in the tbl_Content from the 7th April has gone from 282,901,304 KB to 301,318,640 KB; 17.5 Gigabytes. Taking out weekends and public holidays that is almost 2 GB a day.

Update 12 May 2014: Usage has gone up consistently over the last couple of weeks. We have been shrinking regularly as it keeps expanding inappropriately. Here’s the current state:

I then disabled the CodeIndex and ran the delete commands as Anthony Diaz suggested in the comments:

It has removed about 500,000 records from the content table but only about 2 GB of data. We’ll see how it does for daily growth.

![]()